A practical roadmap to guide learning health systems

Claire Allen (right), lead author of a new paper from our Learning Health System Program, and co-author Kayne Mettert

Claire Allen shares how a new paper from our LHS Program can help learning health systems move from concept to reality

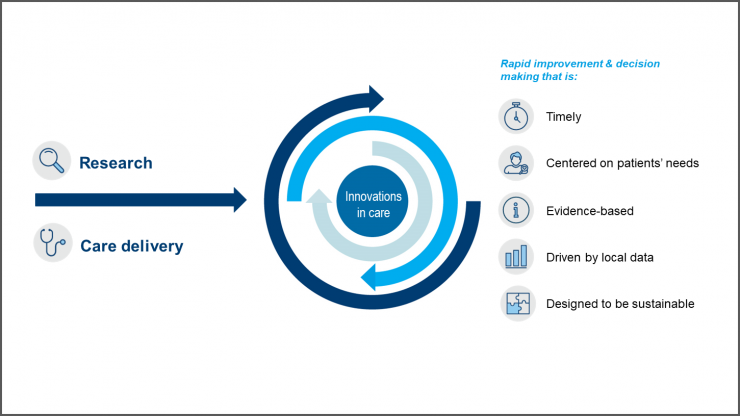

In a learning health system (LHS), knowledge from internal data and experience is combined with external evidence and routinely put into practice to improve care and patient health. But how does a health care organization create a successful LHS and measure its impact? A recent paper led by Claire Allen, a senior implementation and evaluation associate at Kaiser Permanente Washington Health Research Institute (KPWHRI), answers these important questions — providing a roadmap for understanding the core components of an LHS, how they relate to one another when operationalized in practice, and how to measure their success. Published online in the journal Learning Health Systems, the paper draws extensively from the authors’ collective experience leading the Kaiser Permanente Washington Learning Health System Program since it was established in 2017. We talked with Ms. Allen about this paper’s significance and how it can benefit other health systems.

Why did you and the KP Washington LHS Program team write this paper?

We wanted to help organizations interested in establishing an LHS by giving them practical and concrete guidance on how to operationalize and evaluate LHS activities. Our team found that the existing LHS literature was focused on theoretical concepts like partnership, patient engagement, and other ideas that can be hard to define and implement. We wanted to be specific about how to apply those concepts in the real world — and how to measure them.

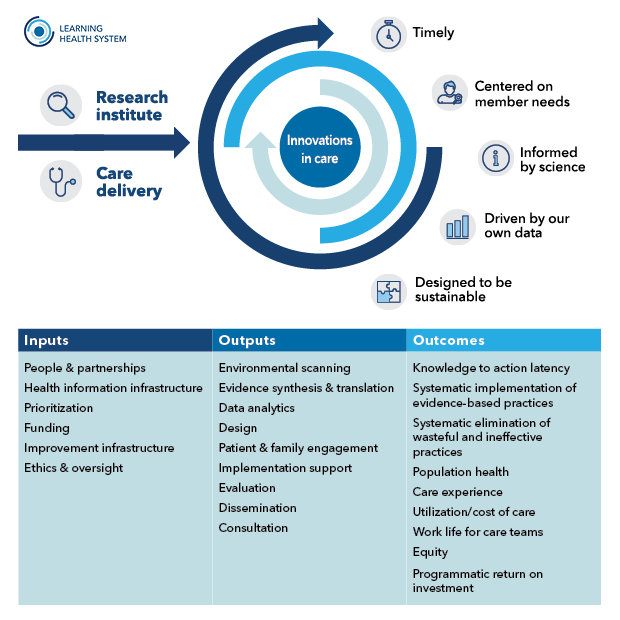

When I joined the LHS Program at KP Washington a couple of years ago, the team had already identified the core constructs of an LHS, but they hadn’t yet clarified how those constructs relate to one another or how to measure them. I have an interest in measuring program effectiveness, so I took the lead on putting those core constructs together in a model that explains each one in relation to LHS operations and provides measures for evaluating them. The model identifies essential elements for establishing an LHS (the inputs), clarifies specific LHS operational activities (the outputs), and defines key outcomes to evaluate processes and impact of an LHS (the outcomes).

How can a health system use this new model to launch or improve an LHS?

For a health system that wants to create an LHS, an important first step would be to look at the inputs: the key ingredients that you need to get started, such as funding to support the work and a health information infrastructure that can provide relevant data to the people who need it for decision-making. Looking at those inputs and using the measures in the model to evaluate the current state would give an organization a concrete way to assess its readiness for launching an LHS.

Health systems that already have an LHS might be most interested in looking at the outputs and the outcomes — the key activities an LHS should engage in and how you measure their impact. This could provide insight into where an LHS is facing important gaps that limit its productivity or effectiveness. For example, perhaps an LHS has already mastered key activities like conducting rapid literature reviews and synthesizing evidence. But maybe they haven’t yet explored patient and family engagement. Or maybe they’ve started to engage patients and families through focus groups or interviews, but they’re not sure how to evaluate those efforts.

Why is it important to evaluate specific LHS activities, such as patient engagement?

Engaging patients and families in improving care is a concept that a lot of people have been talking about for some time, and it’s a core piece of most LHS models. But if I’m a leader in a health system that wants to launch an LHS program, I could read about the concept of patient and family engagement in other organizations but still not have a clear idea of how to operationalize it.

Attaching some sample measures to a concept forces you to think about how to actually use it in practice. For patient and family engagement, for example, our model suggests measuring the number and demographics of patients and family members involved as partners and the number of projects that incorporate patient and family member input. Another important measure is to systematically document how that input is applied. It’s our responsibility as researchers and as health system leaders to close the loop and report back to patients and families about how we’ve used the knowledge and experiences they shared with us.

Tracking metrics on patient engagement is also essential for transparency and representativeness: It lets us be clear about what we’re counting as engagement and helps us ensure we have diverse perspectives that represent our patients and communities.

What are some of the specific outcomes that an LHS should aim for?

Ultimately, the key outcomes are about having a positive impact on patient health and experience of care, as well as work life for care teams. But our model also includes process measures that influence these downstream outcomes. One such measure is what we call “knowledge to action latency” — the time lag for clinical practices to adopt research evidence to improve care for patients.

The core purpose of an LHS is to accelerate the movement of evidence into practice. When information is holed up in medical journals for years, and not in the hands of doctors and care teams, it means that our patients are not benefiting from research. So, to me, knowledge to action latency is probably the most vital outcome for all LHSs to measure.

That points to another thing that’s important to remember: LHSs need to walk the talk. Transparency is essential to working together to improve care. This means being clear about LHS activities and how we’re measuring their effectiveness. It also means measuring how well we’re doing as LHS programs. We can’t very well ask health systems to do iterative evaluation for the purpose of improvement if we’re not doing that ourselves.

Ms. Allen’s co-authors on the paper, “A roadmap to operationalize and evaluate impact in a learning health system,” are KPWHRI’s Katie Coleman, Kayne Mettert, Cara Lewis, Emily Westbrook, and Paula Lozano.
